Cherry-Picking in the Endless Orchard of Diversity Studies

The Task Force One Navy Report and the Biases of Social Science Research

The Disappointing Reality of “Evidence-Based” Policymaking

One of the most popular refrains in policymaking circles is the claim that policy should be “evidence-based.” In the abstract, I'm supportive of the idea that our policy frameworks should be constructed using the raw materials of peer-reviewed, empirical research. In reality, however, three problems make me wary about the value of pursuing “evidence-based” policy at all: (1) the research related to most questions of social policymaking is generally biased; (2) most of the conclusions derived from the research come from samples, sectors, and situations that are poor analogs for the ones they are being applied to; (3) there are many studies within the research on a topic that could be cited to provide a justification for doing the exact opposite thing from a policy perspective. In other words, it is not only that “purely evidence-based policy doesn’t exist,” it is that it cannot exist given the current limitations of social science research.

The Task Force One Navy Report

In a series of posts, I’m going to explore these three problems in the context of military policy. More specifically, I’m going to examine the role that empirical social science research has played in shaping the Navy’s “Task Force One Navy” (TF1N) report. The TF1N report is a 142-page document written in the aftermath of the racially motivated unrest of 2020. As Phillip Keuhlen writes, “The Task Force One Navy Final Report was launched from the twin assumptions that the Navy suffers from systemic racism and that diversity benefits the Navy's military mission.”

In this post, I’m going to examine only one small part of the TF1N and its discussion of diversity: the claim that “diverse teams are 58 percent more likely than non-diverse teams to accurately assess a situation. In addition, gender-diverse organizations are 15 percent more likely to outperform other organizations and diverse organizations are 35 percent more likely to outperform their non-diverse counterparts.” The TF1N cites only two studies to back up these claims: a non-peer-reviewed report based on proprietary (not publicly available) data by McKinsey & Company entitled “Diversity Matters” and a 2014 peer-reviewed study by Levine et al. published in the Proceedings of the National Academy of Sciences of the United States of America entitled “Ethnic Diversity Deflates Price Bubbles.” Below, I will talk about the problems associated with “cherry-picking” these two studies from the endless orchard of biased diversity studies.

The Endless Orchard of Biased Diversity Studies

There are literally thousands of studies published on diversity EVERY YEAR. In fact, it is so difficult to count all of the peer-reviewed studies coming out each year on the topic of diversity and inclusion that peer-reviewed studies are required just to come up with a number. Take, for example, “Literature Review on Diversity and Inclusion at Workplace, 2010–2017.” This article searches a database of 254,617 journals in order to identify articles about “diversity and inclusion” that are “relevant to the field of management.” Despite limiting their search to journals that are “relevant to the field of management,” the authors manage to locate more than 45,000 papers on gender, racial and disability diversity in 2017 alone (see the figure below).

What do these studies have to tell us about diversity? Without even looking at any of the findings from this massive body of work, it is important to point out that not all studies are equally likely to be published in the first place. Why? While the reasons behind “publication bias” are many and varied, I want to highlight one particularly pernicious cause here: a rigidly enforced ideological monoculture within academia.

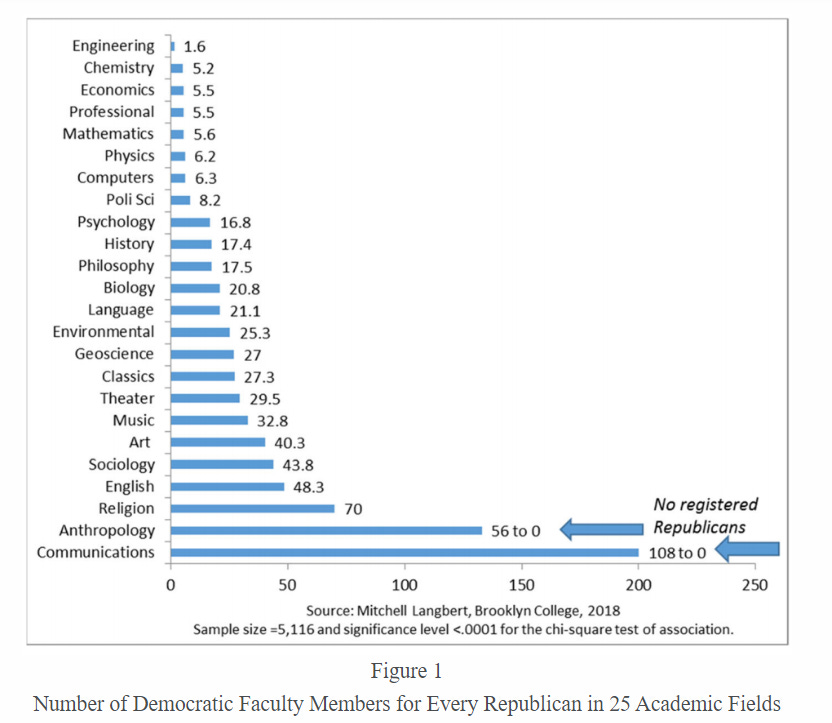

As Langbert shows, there is very little ideological diversity in most academic disciplines. Democrat-to-Republican registration ratios range from 2:1 in engineering to 108:0 in communications and interdisciplinary studies.

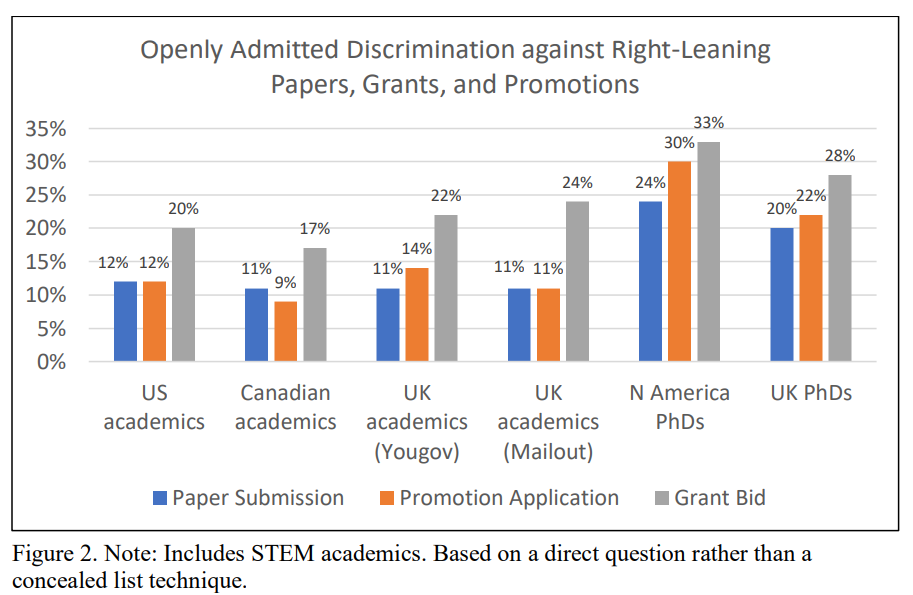

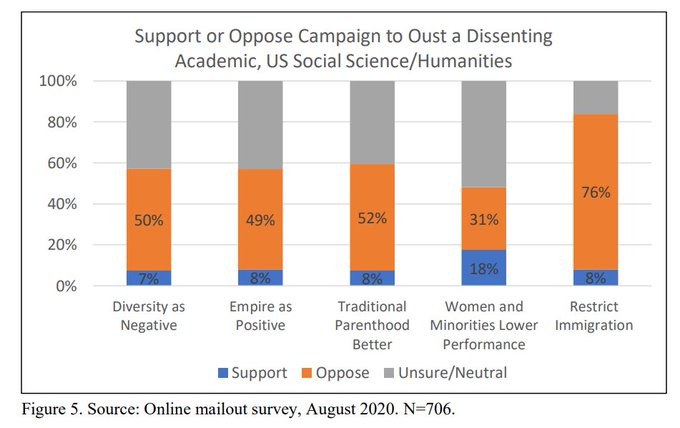

According to political scientist Eric Kaufmann, the problem is not merely the distribution of political beliefs among faculty members. It is also the fact that many academics are willing to discriminate against conservatives and ideological dissenters in hiring, promotion, grants, and publications (see graph below).

This is more than mere talk. As Kaufmann also reports, conservative faculty members in the US were four times as likely as liberal faculty members to report being threatened with disciplinary action for political speech. Given that there are five times as many liberal faculty members, this disparity is striking. Unsurprisingly, conservatives (and those with heterodox views on politically sensitive issues) have picked up on the message sent by their liberal colleagues. Data from Inbar and Lammers (2012) reveal that conservative faculty are far more likely to believe that they will be discriminated against than their liberal counterparts:

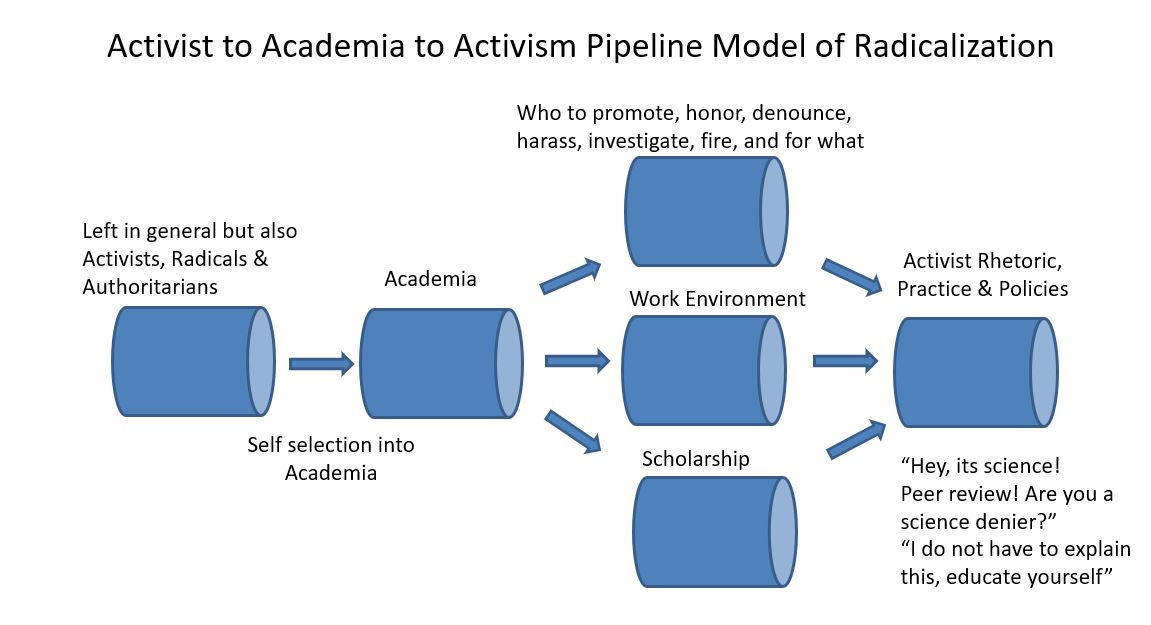

As social psychologist Lee Jussim argues, this combination of ideological homogeneity and politically-motivated discrimination has the potential to detrimentally influence “almost every step of the research process, including but not restricted to what gets studied, funded, and published, how studies are designed and interpreted, and which conclusions become widely accepted within a field.” More precisely, the concern is that the homogeneity and conformity within academia lead to the production and promotion of only those findings that are consistent with left-leaning narratives. Jussim brilliantly captures the essence of these dynamics within academia through the following visualization:

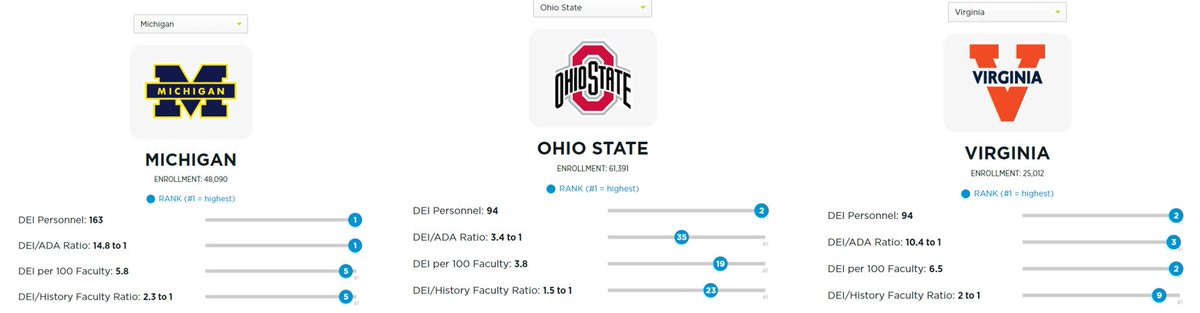

The pressure to produce politically palatable findings is not evenly distributed across areas of research. The demand to come to the “right” conclusion is likely to be greater in the study of diversity than elsewhere. As the faculty data above suggest, the ideological homogeneity increases as the discipline in question becomes more likely to study diversity. More importantly, as Green and Paul argue, “[p]romoting DEI has become a primary function of higher education.” To take just three examples, the University of Michigan, Ohio State, and the University of Virginia have 163, 94, and 94 DEI personnel, respectively. Across American universities, DEI staff now make up an average of 3.4 positions for every 100 tenured faculty.

This new institutional imperative also shows up in the mandated diversity statements that universities now require in the hiring and promotion processes of faculty members. For just one example, consider the application requirements for a faculty position in the History Department at California State University, Sacramento. Applicants are now required to submit a diversity statement showing, among other things, how the candidate would “advance the History Department’s goal of promoting an anti-racist and anti-oppressive campus to recruit, retain, and mentor students.” CSUS’s requirement is not an aberration.

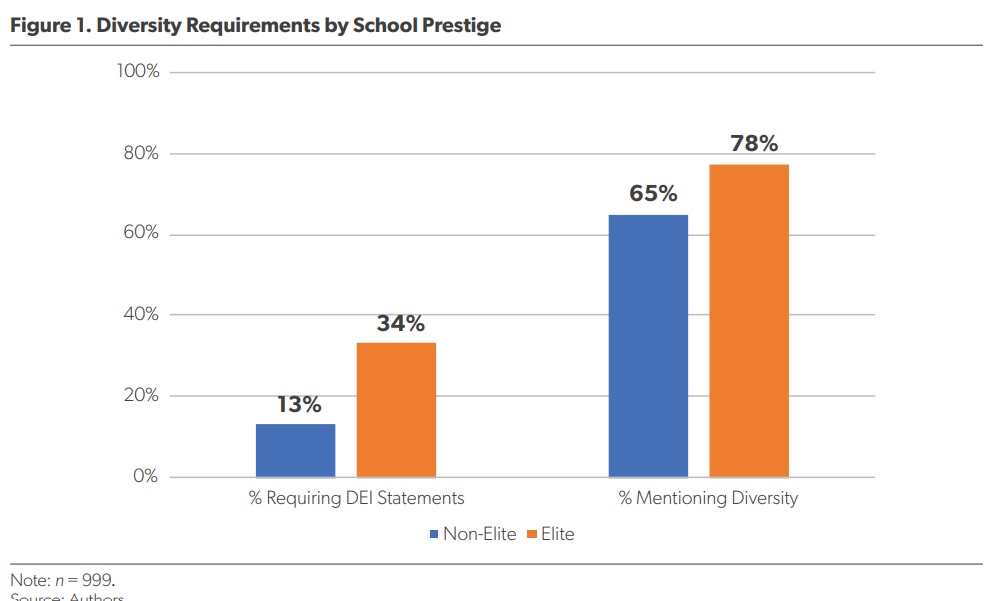

More generally, the American Enterprise Institute recently released a report on the prevalence of DEI statements in university hiring. The report found that 68% of job ads in 2020 mentioned diversity, and 19% required a separate diversity statement. Importantly, the report also finds that “prestigious” universities are significantly more likely to have DEI requirements than “nonprestigious” universities.

As Redstone and Villasenor note, these diversity statements “function as a filter that itself is exclusionary, as it favors faculty and would-be-faculty whose academic research happens to involve activities that can be cited in a diversity statement.”

In addition to this filtering effect, it is important to emphasize the effect that institutional signaling related to diversity is likely to have on research. Given that “prestigious” universities are, by definition, the ones that produce the most research, our understanding of diversity is coming almost exclusively from work produced by people in institutions defined by an ideological commitment to the value of diversity.

Perhaps it is unsurprising in this context that many academics are not opposed to “canceling” those who express dissenting views on the value of diversity. As Kaufmann’s survey of faculty members shows, only half of professors in the social sciences and humanities would oppose a campaign to fire an academic who argues that “diversity is a negative.” Questioning the value of diversity (even if this questioning is motivated by a good faith interpretation of empirical data) has become disqualifying for faculty positions at contemporary American universities.

All of this is just to suggest that the published research is likely to overstate the benefits of diversity while minimizing its costs. Policymakers should, therefore, take the findings of social science research on diversity with an appropriately large dose of salt. The rest of us should always remember that there is an endless orchard of academic studies available to anyone looking to cherry-pick research affirming the value of diversity.

Cherry Picking for the TF1N

“Evidence-based” policymaking requires honest engagement with all of the best available evidence about the likely costs and benefits of the available policy options. Strategically selecting one or two studies that confirm one’s predetermined policy approach (i.e., “cherry-picking”) is not “evidence-based policymaking”; it is a disingenuous attempt to deflect criticism by relocating the justification for policy decisions from the realm of politics to the realm of “science.”

Unfortunately, I get the sense that this deflection is exactly what the authors of the TF1N had in mind when citing the McKinsey & Company and PNAS studies. Indeed, if the authors had been concerned at all about making policy on the basis of the best available evidence, they would have discovered that diversity’s relationship to performance is actually far more complicated than what is communicated by the toplines of these two reports. Contrary to the claim that “gender-diverse organizations are 15 percent more likely to outperform other organizations,” a number of meta-analyses have found null, mixed, or negative effects for gender diversity. In a review of 20 peer-reviewed studies on 3097 companies, Pletzer et al., for example, conclude that “the mere representation of females on corporate boards is not related to firm financial performance if other factors are not considered.” More recently, in a 2021 review, Yu and Madison write “that quotas for women on corporate boards have mainly decreased company performance.”

The claim that racially and ethnically “diverse organizations are 35 percent more likely to outperform their non-diverse counterparts” also runs contrary to numerous studies. For example, Filbeck et al. (2017) find no difference in financial performance between companies on DiversityInc’s list of Top 50 Companies for Diversity and those on the S&P 500 index. More generally, numerous peer-reviewed studies show that racial and ethnic diversity can complicate public policy decisions, increase workplace conflict, and reduce social connectedness. In a meta-analysis of 87 studies on the relationship between ethnic diversity and social trust, Dinesen et al. (2020) conclude that there is “a statistically significant negative relationship between ethnic diversity and social trust across all studies.”

Most importantly for our purposes here, the authors of the PNAS study themselves are incredibly explicit about the potentially high costs of diversity. In fact, the entire theoretical justification for their work is that observable racial and ethnic diversity enhances skepticism, distrust, and competition. As they write:

“Ethnic diversity facilitates friction. This friction can increase conflict in some group settings, whether a work team, a community or a region. Conversely, ethnic homogeneity may induce confidence, or instrumental trust, in others' decisions (confidence not necessarily in their benevolence or morality, but in the reasonableness of their decisions, as captured in such everyday statements as ‘I trust his judgment’).”

According to the authors of the PNAS study, this “friction” can produce positive consequences in some contexts and incredibly damaging consequences in others. Specifically:

“In modern markets, vigilant skepticism is beneficial; overreliance on others’ decisions is risky… Such friction, however, can cause conflict and complicate collective decisions. The challenge, then, is in establishing rules and institutions to address ethnic diversity and its effects. Without them, conflict can be destructive; with them, diversity can benefit the collective.”

In other words, previous research indicates that racial and ethnic diversity might make things much worse in team and non-market settings such as the military.

As this brief review suggests, the authors of TF1N could very easily make a well-supported argument against increasing racial, ethnic, and gender diversity in the Navy using the existing peer-reviewed research, including the PNAS study itself. The point here is not to suggest that they should make this case or that we should be credulous about this handful of studies and dismissive of what the McKinsey and PNAS studies have to say. The point is that “evidence-based” policymaking means that we should attempt to ground our military policy decisions in a critical evaluation of the best available evidence, and the best available evidence rarely points in a single direction. Even given the considerable challenges to producing fair evaluations of diversity efforts (described above), an honest and complete engagement with the peer-reviewed literature would inevitably force us to realize that diversity is rarely an unalloyed good. Diversity’s benefits inevitably come with costs, conditions, and caveats. Making the best policy will require taking all of these into account rather than pretending they don’t exist. More generally, if we want to engage with the literature, let's engage with all of it instead of cherry-picking the studies that most closely reflect our priors.

In the next post, I’m going to explore how the TF1N makes a series of indefensible “giant leaps” in attempting to apply these two narrow studies to the entire United States Navy. In the meantime, read Phillip Keuhlen’s incisive critique of TF1N here.